I’m planning to build a new home server and am considering disk spanning to create matching disk sizes for a RAID array. I have 2 x 2 TB drives and 4 x 4 TB drives.

Comparison with RAID 5

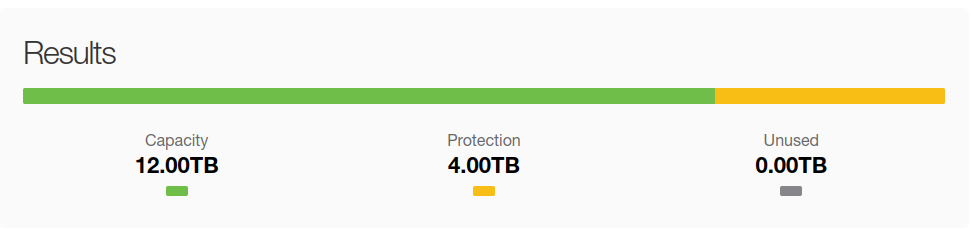

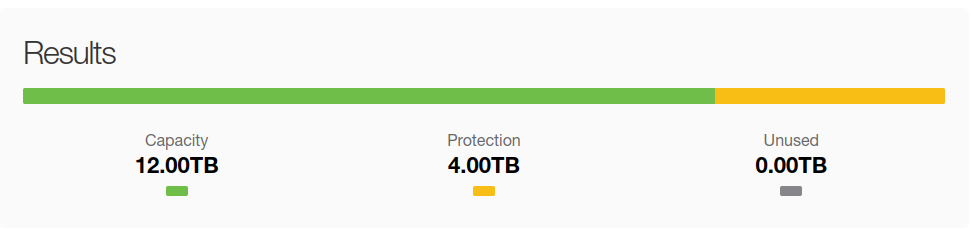

4 x 4 TB drives

- 1 RAID array

- 12 TB total

4 x 4 TB drives & 2 x 2 TB drives

- 2 RAID arrays

- 14 TB total

5 x 4* TB drives

- Four 4 TB disks, plus the two 2 TB disks spanned to produce a fifth 4 TB block device

- 16 TB total
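To double-check the totals above, here is a rough sketch of the arithmetic in Python. It assumes RAID 5 gives (n - 1) times the smallest member, that the second array in the middle option is a RAID 1 mirror of the two 2 TB drives (my assumption, but it is the only layout that adds 2 TB), and it ignores metadata overhead and the TB vs. TiB difference. The function names are just made up for illustration.

```python
def raid5_usable_tb(member_sizes_tb):
    """Usable capacity of a RAID 5 array: (n - 1) * smallest member."""
    if len(member_sizes_tb) < 3:
        raise ValueError("RAID 5 needs at least 3 members")
    return (len(member_sizes_tb) - 1) * min(member_sizes_tb)


def raid1_usable_tb(member_sizes_tb):
    """Usable capacity of a RAID 1 mirror: the smallest member."""
    if len(member_sizes_tb) < 2:
        raise ValueError("RAID 1 needs at least 2 members")
    return min(member_sizes_tb)


def spanned_tb(disk_sizes_tb):
    """A spanned (concatenated) device is as large as the sum of its disks."""
    return sum(disk_sizes_tb)


# Option 1: only the four 4 TB drives in one RAID 5 array.
only_4tb = raid5_usable_tb([4, 4, 4, 4])

# Option 2: RAID 5 over the 4 TB drives plus an assumed RAID 1 over the 2 TB drives.
two_arrays = raid5_usable_tb([4, 4, 4, 4]) + raid1_usable_tb([2, 2])

# Option 3: the two 2 TB drives spanned into a fifth 4 TB RAID 5 member.
with_span = raid5_usable_tb([4, 4, 4, 4, spanned_tb([2, 2])])

print(only_4tb, two_arrays, with_span)  # prints: 12 14 16
```

So the spanned layout gains 2 TB over two separate arrays, at the cost of one RAID 5 member depending on two physical disks.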

I’m not actually planning to do this, because the setup would probably have all kinds of problems. I do wonder, though: what would those problems be?

Typical problems with parity arrays are:

Unrelated to parity: